
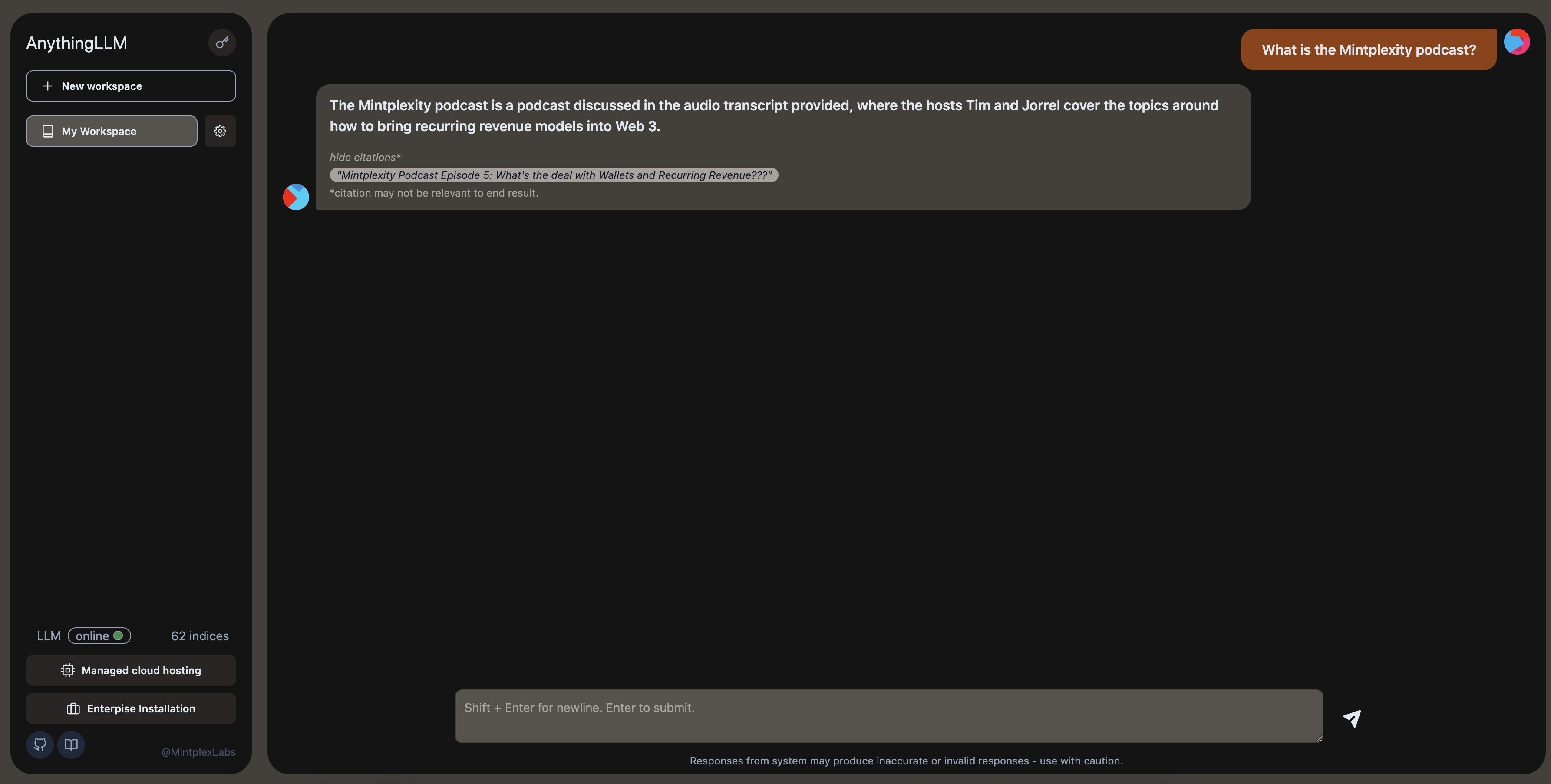

AnythingLLM: A business-compliant document chatbot.

A hyper-efficient and open-source enterprise-ready document chatbot solution for all.

A full-stack application that enables you to turn any document, resource, or piece of content into context that any LLM can use as references during chatting. This application allows you to pick and choose which LLM or Vector Database you want to use. Currently this project supports Pinecone, ChromaDB & more for vector storage and OpenAI for LLM/chatting.

Watch the demo!

Product Overview

AnythingLLM aims to be a full-stack application where you can use commercial off-the-shelf LLMs or popular open source LLMs and vectorDB solutions.

AnythingLLM is a full-stack product that you can run locally or host remotely, and use to chat intelligently with any documents you provide it.

AnythingLLM divides your documents into objects called workspaces. A Workspace functions a lot like a thread, but with the addition of containerization of your documents. Workspaces can share documents, but they do not talk to each other so you can keep your context for each workspace clean.
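The workspace idea can be pictured with a small sketch. The `Document` and `Workspace` classes below are hypothetical and are not AnythingLLM's actual data model; they only illustrate that two workspaces may reference the same document while each keeps its own chat history.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    """A piece of content that can be attached to one or more workspaces."""
    name: str


@dataclass
class Workspace:
    """Hypothetical workspace: its own document set and its own chat history."""
    name: str
    documents: set = field(default_factory=set)
    history: list = field(default_factory=list)

    def add_document(self, doc: Document) -> None:
        self.documents.add(doc)

    def chat(self, message: str) -> None:
        # History is stored per workspace and never shared.
        self.history.append(message)


handbook = Document("employee-handbook.pdf")
hr = Workspace("hr")
legal = Workspace("legal")

# The same document can be shared by two workspaces...
hr.add_document(handbook)
legal.add_document(handbook)

# ...but a chat in one workspace does not appear in the other.
hr.chat("How many vacation days do new hires get?")
```

After this runs, both workspaces contain the handbook, while only `hr.history` holds the question. That separation is what keeps each workspace's context clean.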

Some cool features of AnythingLLM

- Multi-user instance support and oversight

- Atomically manage documents in your vector database from a simple UI

- Two chat modes: conversation and query. Conversation retains previous questions and amendments. Query is simple QA against your documents.

- Each chat response contains a citation that is linked to the original content

- Simple technology stack for fast iteration

- 100% Cloud deployment ready.

- "Bring your own LLM" model (still in progress; only OpenAI is supported currently)

- Extremely efficient cost-saving measures for managing very large documents. You'll never pay to embed a massive document or transcript more than once. 90% more cost effective than other document chatbot solutions.

- Full Developer API for custom integrations!
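The difference between the two chat modes listed above can be sketched as follows. This is an illustration only, not the project's implementation: `build_messages` is a hypothetical helper, and the role/content message shape is an assumption borrowed from common chat-completion APIs.

```python
def build_messages(mode, history, question, context):
    """Assemble the message list sent to the LLM (hypothetical helper).

    mode     -- "conversation" or "query"
    history  -- earlier (question, answer) pairs in this workspace
    question -- the new user question
    context  -- document snippets retrieved from the vector database
    """
    if mode not in ("conversation", "query"):
        raise ValueError(f"unknown chat mode: {mode}")

    messages = [{"role": "system", "content": "Context:\n" + "\n".join(context)}]

    # Only conversation mode replays earlier turns; query mode starts fresh.
    if mode == "conversation":
        for past_question, past_answer in history:
            messages.append({"role": "user", "content": past_question})
            messages.append({"role": "assistant", "content": past_answer})

    messages.append({"role": "user", "content": question})
    return messages


history = [("What is the refund window?", "30 days from delivery.")]
context = ["Refunds are accepted within 30 days of delivery."]
question = "Does that include sale items?"

conversation = build_messages("conversation", history, question, context)
query = build_messages("query", history, question, context)
```

Here `conversation` carries the earlier question and answer, so the follow-up can be resolved against them, while `query` contains only the retrieved context and the new question.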

Technical Overview

This monorepo consists of three main sections:

- collector: Python tools that enable you to quickly convert online resources or local documents into an LLM-usable format.

- frontend: A viteJS + React frontend that you can run to easily create and manage all your content the LLM can use.

- server: A nodeJS + express server to handle all the interactions and do all the vectorDB management and LLM interactions.

Requirements

- yarn and node on your machine

- python 3.9+ for running scripts in collector/.

- access to an LLM like GPT-3.5, GPT-4.

- (optional) a vector database like Pinecone, qDrant, Weaviate, or Chroma*.

*AnythingLLM by default uses a built-in vector db called LanceDB.

How to get started (Docker - simple setup)

Get up and running in minutes with Docker

How to get started (Development environment)

- Run yarn setup from the project root directory. This will fill in the required .env files you'll need in each of the application sections. Go fill those out before proceeding or else things won't work right.

- Run cd frontend && yarn install && cd ../server && yarn install from the project root directory.

To boot the server locally (run commands from root of repo):

- ensure server/.env.development is set and filled out.

- yarn dev:server

To boot the frontend locally (run commands from root of repo):

- ensure frontend/.env is set and filled out.

- ensure VITE_API_BASE="http://localhost:3001/api"

- yarn dev:frontend
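If you want to confirm the frontend setting before booting, a small check like the one below can help. It is a minimal sketch and not part of the repo: `read_env` and `check_api_base` are hypothetical helpers, and the parser assumes plain KEY=VALUE lines with optional quotes.

```python
from pathlib import Path


def read_env(path):
    """Parse simple KEY=VALUE lines, ignoring blanks and # comments."""
    values = {}
    for line in Path(path).read_text().splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes, e.g. VITE_API_BASE="http://..."
        values[key.strip()] = value.strip().strip("\"'")
    return values


def check_api_base(path, expected="http://localhost:3001/api"):
    """Return True when VITE_API_BASE in the file matches the expected URL."""
    return read_env(path).get("VITE_API_BASE") == expected
```

Calling `check_api_base("frontend/.env")` from the root of the repo returns True only when the file defines VITE_API_BASE with the local server URL given above.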

Next, you will need some content to embed. This could be a YouTube channel, Medium articles, local text files, Word documents, and the list goes on. This is where you will use the collector/ part of the repo.

Go set up and run collector scripts

Contributing

- create issue

- create PR with branch name format of <issue number>-<short name>

- yee haw let's merge

Telemetry

AnythingLLM by Mintplex Labs Inc contains a telemetry feature that collects anonymous usage information.

Why?

We use this information to help us understand how AnythingLLM is used, to help us prioritize work on new features and bug fixes, and to help us improve AnythingLLM's performance and stability.

Opting out

Set DISABLE_TELEMETRY in your server or docker .env settings to "true" to opt out of telemetry.

DISABLE_TELEMETRY="true"

What do you explicitly track?

We will only track usage details that help us make product and roadmap decisions, specifically:

- Version of your installation

- When a document is added or removed. No information about the document. Just that the event occurred. This gives us an idea of use.

- Type of vector database in use. Lets us know which vector database provider is the most used to prioritize changes when updates arrive for that provider.

- Type of LLM in use. Lets us know the most popular choice and prioritize changes when updates arrive for that provider.

- Chat is sent. This is the most regular "event" and gives us an idea of the daily activity of this project across all installations. Again, only the event is sent - we have no information on the nature or content of the chat itself.

You can verify these claims by finding all locations Telemetry.sendTelemetry is called. Additionally these events are written to the output log so you can also see the specific data which was sent - if enabled. No IP or other identifying information is collected. The Telemetry provider is PostHog - an open-source telemetry collection service.
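The opt-out and logging behaviour described in this section can be illustrated with a short sketch. It is written in Python for illustration only and is not the server's code (the server is nodeJS): `telemetry_enabled`, `send_telemetry`, the `transport` callable standing in for the PostHog client, and the `sent_chat` event name are all assumptions.

```python
import logging
import os

logger = logging.getLogger("telemetry")


def telemetry_enabled(env=None):
    """Telemetry is on unless DISABLE_TELEMETRY is set to "true"."""
    env = os.environ if env is None else env
    return env.get("DISABLE_TELEMETRY") != "true"


def send_telemetry(event, properties=None, env=None, transport=None):
    """Log and forward an anonymous event; do nothing when opted out.

    `transport` is a hypothetical callable standing in for the PostHog client.
    Returns True if the event was sent, False if telemetry is disabled.
    """
    if not telemetry_enabled(env):
        return False
    payload = {"event": event, "properties": properties or {}}
    # Write the exact payload to the log so it can be inspected.
    logger.info("telemetry event: %s", payload)
    if transport is not None:
        transport(payload)
    return True


sent = []
# Default settings: the event name is forwarded, with no chat content attached.
send_telemetry("sent_chat", env={}, transport=sent.append)
# Opted out: nothing is logged or forwarded.
send_telemetry("sent_chat", env={"DISABLE_TELEMETRY": "true"}, transport=sent.append)
```

Only the first call reaches `sent`. The second is dropped because the opt-out variable is set to "true".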