|

|

||

|---|---|---|

| .vscode | ||

| cloud-deployments | ||

| collector | ||

| docker | ||

| frontend | ||

| images | ||

| server | ||

| .dockerignore | ||

| .gitattributes | ||

| .gitignore | ||

| .nvmrc | ||

| clean.sh | ||

| LICENSE | ||

| package.json | ||

| README.md | ||

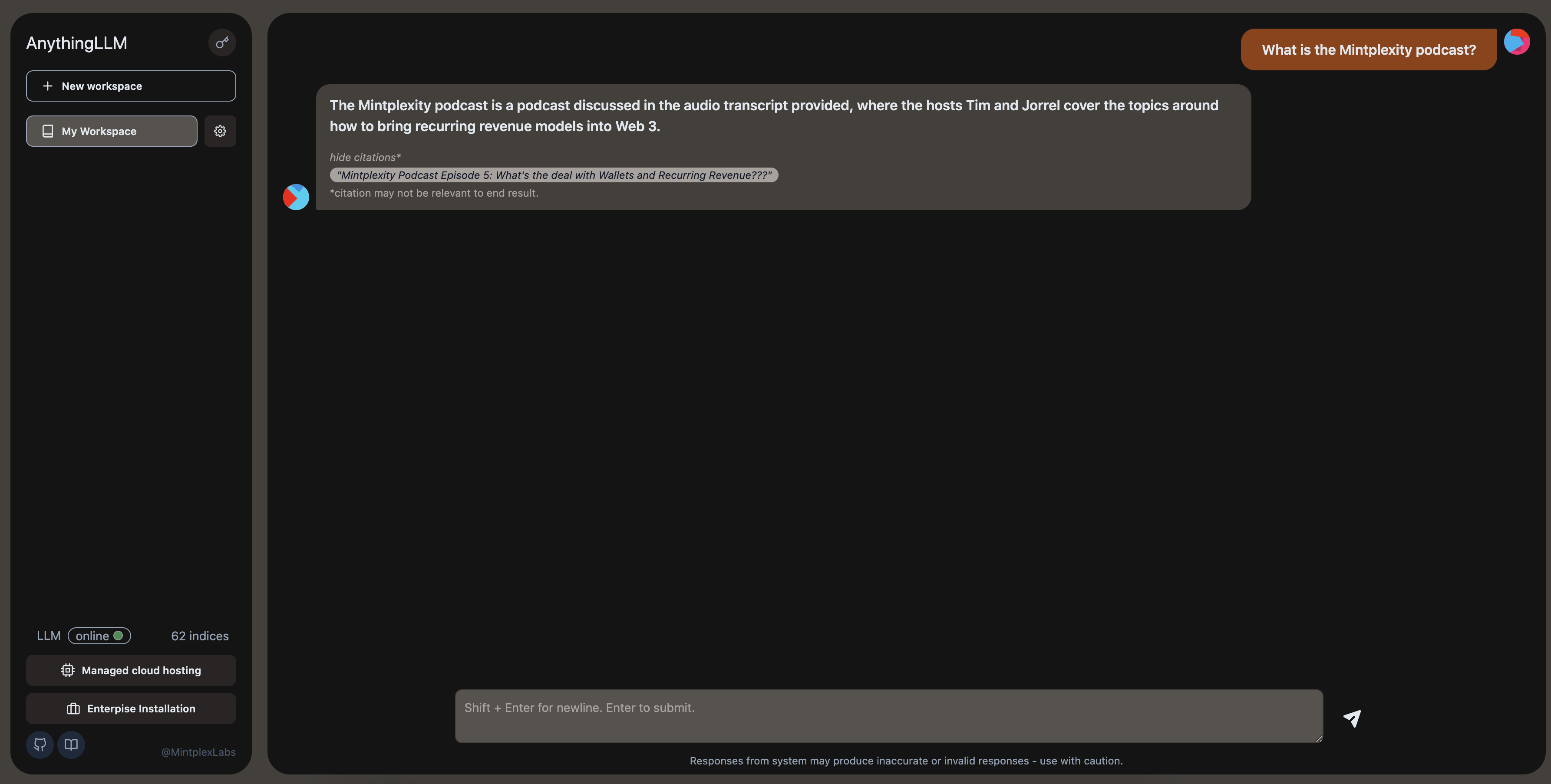

AnythingLLM: A business-compliant document chatbot.

A hyper-efficient and open-source enterprise-ready document chatbot solution for all.

|

A full-stack application that enables you to turn any document, resource, or piece of content into context that any LLM can use as references during chatting. This application allows you to pick and choose which LLM or Vector Database you want to use. Currently this project supports Pinecone, ChromaDB & more for vector storage and OpenAI for LLM/chatting.

Watch the demo!

Product Overview

AnythingLLM aims to be a full-stack application where you can use commercial off-the-shelf LLMs or popular open source LLMs and vectorDB solutions.

Anything LLM is a full-stack product that you can run locally as well as host remotely and be able to chat intelligently with any documents you provide it.

AnythingLLM divides your documents into objects called workspaces. A Workspace functions a lot like a thread, but with the addition of containerization of your documents. Workspaces can share documents, but they do not talk to each other so you can keep your context for each workspace clean.

Some cool features of AnythingLLM

- Multi-user instance support and oversight

- Atomically manage documents in your vector database from a simple UI

- Two chat modes

conversationandquery. Conversation retains previous questions and amendments. Query is simple QA against your documents - Each chat response contains a citation that is linked to the original content

- Simple technology stack for fast iteration

- 100% Cloud deployment ready.

- "Bring your own LLM" model. still in progress - openai support only currently

- Extremely efficient cost-saving measures for managing very large documents. You'll never pay to embed a massive document or transcript more than once. 90% more cost effective than other document chatbot solutions.

Technical Overview

This monorepo consists of three main sections:

collector: Python tools that enable you to quickly convert online resources or local documents into LLM useable format.frontend: A viteJS + React frontend that you can run to easily create and manage all your content the LLM can use.server: A nodeJS + express server to handle all the interactions and do all the vectorDB management and LLM interactions.

Requirements

yarnandnodeon your machinepython3.9+ for running scripts incollector/.- access to an LLM like

GPT-3.5,GPT-4. - a Pinecone.io free account*. *you can use drop in replacements for these. This is just the easiest to get up and running fast. We support multiple vector database providers.

How to get started (Docker - simple setup)

Get up and running in minutes with Docker

How to get started (Development environment)

yarn setupfrom the project root directory.- This will fill in the required

.envfiles you'll need in each of the application sections. Go fill those out before proceeding or else things won't work right.

- This will fill in the required

cd frontend && yarn install && cd ../server && yarn installfrom the project root directory.

To boot the server locally (run commands from root of repo):

- ensure

server/.env.developmentis set and filled out.yarn dev:server

To boot the frontend locally (run commands from root of repo):

- ensure

frontend/.envis set and filled out. - ensure

VITE_API_BASE="http://localhost:3001/api"yarn dev:frontend

Next, you will need some content to embed. This could be a Youtube Channel, Medium articles, local text files, word documents, and the list goes on. This is where you will use the collector/ part of the repo.

Go set up and run collector scripts

Contributing

- create issue

- create PR with branch name format of

<issue number>-<short name> - yee haw let's merge

Telemetry

AnythingLLM by Mintplex Labs Inc contains a telemetry feature that collects anonymous usage information.

Why?

We use this information to help us understand how AnythingLLM is used, to help us prioritize work on new features and bug fixes, and to help us improve AnythingLLM's performance and stability.

Opting out

Set DISABLE_TELEMETRY in your server or docker .env settings to "true" to opt out of telemetry.

DISABLE_TELEMETRY="true"

What do you explicitly track?

We will only track usage details that help us make product and roadmap decisions, specifically:

- Version of your installation

- When a document is added or removed. No information about the document. Just that the event occurred. This gives us an idea of use.

- Type of vector database in use. Let's us know which vector database provider is the most used to prioritize changes when updates arrive for that provider.

- Type of LLM in use. Let's us know the most popular choice and prioritize changes when updates arrive for that provider.

- Chat is sent. This is the most regular "event" and gives us an idea of the daily-activity of this project across all installations. Again, only the event is sent - we have no information on the nature or content of the chat itself.

You can verify these claims by finding all locations Telemetry.sendTelemetry is called. Additionally these events are written to the output log so you can also see the specific data which was sent - if enabled. No IP or other identifying information is collected. The Telemetry provider is PostHog - an open-source telemetry collection service.